Practical Guide to MCP Server Development — Transforming Internal APIs and DBs into a Professional Domain Agent

After reading this article, you will be able to run code that exposes your internal REST API or DB schema through an MCP server, turning a general-purpose LLM into a domain-specialized agent.

General-purpose LLMs are powerful but have one critical limitation: they know nothing about our company's internal API structure, proprietary database schema, or internal business terminology. To bridge this gap, we have so far tried hand-writing lengthy system prompts, building separate RAG pipelines, or fine-tuning. All of these methods carry a heavy maintenance burden and cope poorly with data that changes in real time.

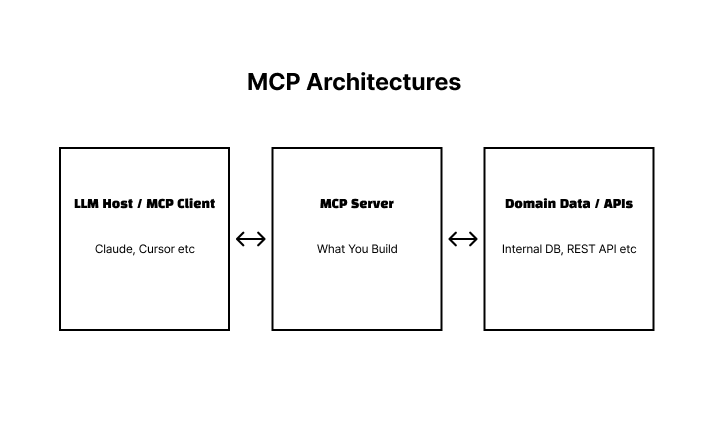

The Model Context Protocol (MCP) is a standardized client-server protocol that lets agents obtain external context at runtime without modifying the LLM. The moment you build your own server, a general-purpose LLM becomes a specialized agent for your organization. This article walks through the process step by step, from core concepts to practical implementation with the Python and TypeScript SDKs.

Key Concepts

MCP Architecture: Client-Server-Transport

MCP consists of three layers.

| Layer | Role | Example |
|---|---|---|
| MCP Client | Built into the LLM host; communicates with the server | Claude Desktop, Cursor, custom agents |
| MCP Server | Exposes domain data through a standard interface | The part you build yourself |
| Transport | How the client and server communicate | stdio (local), SSE (remote HTTP) |

The wire protocol is JSON-RPC 2.0. Every request and response is serialized as JSON and carried over the transport.
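To make the wire format concrete, the sketch below builds a JSON-RPC 2.0 request for the `tools/call` method and a matching response using only Python's standard library. The tool name `get_issue` and the issue ID are illustrative, and the helpers `make_request` and `make_result` are hypothetical names, not part of any SDK.

```python
import json


def make_request(request_id: int, method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request to a single JSON string."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": method, "params": params})


def make_result(request_id: int, result: dict) -> str:
    """Serialize a JSON-RPC 2.0 success response that echoes the request id."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


request = make_request(
    1, "tools/call", {"name": "get_issue", "arguments": {"issue_id": "PROJ-123"}}
)
response = make_result(
    1, {"content": [{"type": "text", "text": "issue PROJ-123 details"}]}
)

# The client pairs a response with its request through the shared "id" field.
print(json.loads(request)["id"] == json.loads(response)["id"])
print(request)
```

In practice the SDK builds and parses these messages for you; seeing them once mainly helps when reading transport logs.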

3 Key Primitives

There are three basic units that an MCP server can expose to clients.

| Primitive | Description | Controlled by | Example |
|---|---|---|---|
| Tools | Functions the LLM can call | LLM (called at the model's discretion) | API lookup, DB query, file saving |
| Resources | Data provided as context | Client (requested by the user or app) | DB schema, configuration file, documentation |
| Prompts | Reusable prompt templates | User | Slash commands, workflow starting points |

Difference between Tools and Resources: Tools are actively called by the LLM when it decides, "I need to use this function now." Resources are closer to passive data sources that supply context in advance. As a rule of thumb, expose reference data that must always be current (such as a schema) as a Resource, and expose anything that performs an action as a Tool.

Transport Selection: stdio vs SSE

```
# stdio (local process)
claude-desktop ──stdin/stdout──> python mcp_server.py

# SSE (remote HTTP)
agent ──HTTP GET /sse──> https://mcp.yourcompany.com
```

| Method | Advantages | Disadvantages | Suitable Cases |
|---|---|---|---|
| stdio | Simple setup, no additional servers required | Single client, local only | Development environment, personal tools |
| SSE | Multiple clients, remote access | Requires HTTPS and authentication, more complexity | Team sharing, production deployment |

Note that the 2025-03-26 revision of the MCP specification introduced Streamable HTTP as the successor to the HTTP+SSE transport. This article keeps the SSE naming, and the trade-offs in the table apply to either remote transport.
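Under the stdio transport, each JSON-RPC message travels as one line of JSON terminated by a newline. A minimal sketch of that framing follows, using an in-memory buffer in place of a real process's stdin/stdout. The function names `write_message` and `read_messages` are hypothetical.

```python
import io
import json
from typing import Iterator, TextIO


def write_message(stream: TextIO, message: dict) -> None:
    """Write one message as a single line; json.dumps escapes embedded newlines."""
    stream.write(json.dumps(message) + "\n")
    stream.flush()


def read_messages(stream: TextIO) -> Iterator[dict]:
    """Yield one decoded message per non-empty line."""
    for line in stream:
        if line.strip():
            yield json.loads(line)


# Simulate the pipe between client and server with an in-memory buffer.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {"jsonrpc": "2.0", "id": 2, "method": "resources/list"})
pipe.seek(0)
received = list(read_messages(pipe))
print([m["method"] for m in received])
```

One practical consequence of this framing: a stdio server should send its own logs to stderr, because anything else printed to stdout would corrupt the message stream.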

Authentication/Authorization

The MCP specification added an OAuth 2.1-based authorization framework in its 2025 update. With the SSE (remote) transport, define the security boundary explicitly, either by using this mechanism or by implementing Bearer tokens or mTLS yourself. The stdio (local) transport relies on OS process isolation, so it is commonly run without additional authentication in development environments.
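For the remote case, a Bearer token check can be as small as the following sketch. It assumes the expected token is supplied by the caller (for example, from an environment variable) and that request headers arrive as a plain dict with an `Authorization` key. `is_authorized` is a hypothetical helper; a production server would more likely delegate this to its web framework or to the OAuth 2.1 flow.

```python
import hmac


def is_authorized(headers: dict, expected_token: str) -> bool:
    """Return True only if the Authorization header carries the expected Bearer token."""
    value = headers.get("Authorization", "")
    scheme, _, token = value.partition(" ")
    if scheme.lower() != "bearer" or not token:
        return False
    # compare_digest avoids leaking, through timing, how much of the token matched.
    return hmac.compare_digest(token.encode(), expected_token.encode())


print(is_authorized({"Authorization": "Bearer s3cret"}, "s3cret"))
print(is_authorized({"Authorization": "Bearer wrong"}, "s3cret"))
print(is_authorized({}, "s3cret"))
```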

Practical Application

Python: Registering an Internal REST API as a Tool

This is an example of registering the team's issue tracker API as a tool so that the LLM can directly query it.

Python Asynchronous Pattern Guide: The code below uses asynchronous Python based on asyncio. If async def, await, and asyncio.run() are unfamiliar, please check the Python asyncio official documentation first.

```bash
pip install "mcp[cli]" httpx
```

```python
# server.py
import asyncio
import os

import httpx
from mcp import types
from mcp.server import Server
from mcp.server.stdio import stdio_server

app = Server("company-issue-tracker")
BASE_URL = "https://issues.yourcompany.com/api/v1"


def _auth_headers() -> dict:
    token = os.environ["ISSUE_TRACKER_TOKEN"]  # Load the token from an environment variable
    return {"Authorization": f"Bearer {token}"}


@app.list_tools()
async def list_tools() -> list[types.Tool]:
    return [
        types.Tool(
            name="get_issue",
            description="Fetch the details of a specific issue from the issue tracker",
            inputSchema={
                "type": "object",
                "properties": {
                    "issue_id": {
                        "type": "string",
                        "description": "ID of the issue to fetch (e.g. PROJ-123)"
                    }
                },
                "required": ["issue_id"]
            }
        ),
        types.Tool(
            name="search_issues",
            description="Search issues by keyword",
            inputSchema={
                "type": "object",
                "properties": {
                    "query": {
                        "type": "string",
                        "description": "Keywords to search for"
                    },
                    "status": {
                        "type": "string",
                        "enum": ["open", "closed", "all"],
                        "description": "Optional filter on issue status"
                    }
                },
                "required": ["query"]
            }
        )
    ]


@app.call_tool()
async def call_tool(name: str, arguments: dict) -> list[types.TextContent]:
    try:
        async with httpx.AsyncClient() as client:
            if name == "get_issue":
                resp = await client.get(
                    f"{BASE_URL}/issues/{arguments['issue_id']}",
                    headers=_auth_headers()
                )
                resp.raise_for_status()
                return [types.TextContent(type="text", text=resp.text)]
            elif name == "search_issues":
                params = {"q": arguments["query"]}
                if "status" in arguments:
                    params["status"] = arguments["status"]
                resp = await client.get(
                    f"{BASE_URL}/issues/search",
                    params=params,
                    headers=_auth_headers()
                )
                resp.raise_for_status()
                return [types.TextContent(type="text", text=resp.text)]
            else:
                return [types.TextContent(
                    type="text",
                    text=f"Error: '{name}' is not a supported Tool."
                )]
    except httpx.HTTPStatusError as e:
        # Report upstream HTTP failures as text the LLM can read and react to
        return [types.TextContent(
            type="text",
            text=f"API error ({e.response.status_code}): {e.response.text}"
        )]
    except Exception as e:
        return [types.TextContent(type="text", text=f"Unexpected error: {str(e)}")]


async def main():
    async with stdio_server() as streams:
        await app.run(*streams, app.create_initialization_options())


if __name__ == "__main__":
    asyncio.run(main())
```

| Code Point | Description |
|---|---|
| `os.environ["ISSUE_TRACKER_TOKEN"]` | Loads the API token from an environment variable. Hardcoding it in source code invites security incidents. |
| Entire `try/except` block | Returns every error raised during Tool execution as `TextContent`. An exception that escapes the handler gives the LLM little to work with and, depending on the client, can halt the agent pipeline. |
| `@app.list_tools()` | Declares the tools this server provides. The client uses this list to tell the LLM which tools it can call. |
| `inputSchema` | Defines parameter types and required fields in JSON Schema format, guiding the LLM to call the tool with well-formed arguments. |
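Because the LLM produces the arguments, it can still send values that violate the schema. The sketch below checks `required` fields, `enum` constraints, and string types by hand, which is a deliberately small subset of JSON Schema. `validate_arguments` is a hypothetical helper; a full validator library would cover far more of the specification.

```python
def validate_arguments(schema: dict, arguments: dict) -> list[str]:
    """Return human-readable problems; an empty list means the arguments pass."""
    problems = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required parameter '{name}'")
    for name, value in arguments.items():
        spec = properties.get(name)
        if spec is None:
            continue  # JSON Schema allows extra properties unless told otherwise
        if spec.get("type") == "string" and not isinstance(value, str):
            problems.append(f"'{name}' must be a string")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"'{name}' must be one of {spec['enum']}")
    return problems


search_schema = {
    "type": "object",
    "properties": {
        "query": {"type": "string"},
        "status": {"type": "string", "enum": ["open", "closed", "all"]},
    },
    "required": ["query"],
}

print(validate_arguments(search_schema, {"query": "login bug", "status": "open"}))
print(validate_arguments(search_schema, {"status": "pending"}))
```

Returning the problem list as text from the tool handler gives the LLM enough detail to correct its next call.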

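To use the server in Claude Desktop, register it in claude_desktop_config.json (on macOS: ~/Library/Application Support/Claude/claude_desktop_config.json; on Windows: %APPDATA%\Claude\claude_desktop_config.json):

```json
{
  "mcpServers": {
    "issue-tracker": {
      "command": "python",
      "args": ["/path/to/server.py"],
      "env": {
        "ISSUE_TRACKER_TOKEN": "your-token-here"
      }
    }
  }
}
```

TypeScript: Providing PostgreSQL Schemas as Resources in Real-Time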

This is a pattern that provides a Resource to ensure that the LLM always references the latest schema information when generating SQL.

```bash
pnpm add @modelcontextprotocol/sdk pg
pnpm add -D @types/pg tsx
```

To use top-level await in TypeScript, package.json requires "type": "module":

```json
{
  "type": "module",
  "scripts": {
    "start": "tsx server.ts"
  }
}
```

```typescript
// server.ts
import { Server } from "@modelcontextprotocol/sdk/server/index.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
import {
  ListResourcesRequestSchema,
  ReadResourceRequestSchema,
} from "@modelcontextprotocol/sdk/types.js";
import { Pool } from "pg";

const server = new Server(
  { name: "db-schema-provider", version: "1.0.0" },
  { capabilities: { resources: {} } }
);

const pool = new Pool({ connectionString: process.env.DATABASE_URL });

// Return the list of available Resources (one per table in the public schema)
server.setRequestHandler(ListResourcesRequestSchema, async () => {
  const { rows } = await pool.query(`
    SELECT table_name
    FROM information_schema.tables
    WHERE table_schema = 'public'
    ORDER BY table_name
  `);

  return {
    resources: rows.map(row => ({
      uri: `db-schema://${row.table_name}`,
      name: `${row.table_name} table schema`,
      mimeType: "application/json",
      description: `Column structure and types of the ${row.table_name} table`
    }))
  };
});

// Return the contents of a specific Resource
server.setRequestHandler(ReadResourceRequestSchema, async (request) => {
  const tableName = request.params.uri.replace("db-schema://", "");

  // The table name is passed as a bound parameter, never interpolated into the SQL
  const { rows } = await pool.query(`
    SELECT
      column_name,
      data_type,
      is_nullable,
      column_default
    FROM information_schema.columns
    WHERE table_schema = 'public' AND table_name = $1
    ORDER BY ordinal_position
  `, [tableName]);

  return {
    contents: [{
      uri: request.params.uri,
      mimeType: "application/json",
      text: JSON.stringify({ table: tableName, columns: rows }, null, 2)
    }]
  };
});

// Clean up the DB connection pool on shutdown
process.on("SIGINT", async () => { await pool.end(); process.exit(0); });
process.on("SIGTERM", async () => { await pool.end(); process.exit(0); });

const transport = new StdioServerTransport();
await server.connect(transport);
```

The key to this pattern is that it queries the actual schema from the DB at runtime. Even if the schema changes, the LLM always receives the latest information without any change to the MCP server code.

DB Connection Pool: Instead of opening and closing a new DB connection for every query, a pool keeps a set of connections open and reuses them. This benefits both performance and stability, and Pool from the pg library is the de facto standard for PostgreSQL connection management in Node.js.
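The same runtime-introspection idea can be sketched in Python. To stay self-contained, the example swaps PostgreSQL for an in-memory SQLite database and reads columns through `PRAGMA table_info` instead of `information_schema`. The `issues` table and the `describe_table` helper are invented for illustration.

```python
import json
import sqlite3


def describe_table(conn: sqlite3.Connection, table: str) -> str:
    """Return the live column layout of `table` as a JSON string."""
    # PRAGMA statements cannot take bound parameters, so allow only known table names.
    known = {
        row[0]
        for row in conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'")
    }
    if table not in known:
        raise ValueError(f"unknown table: {table}")
    columns = [
        {"column_name": name, "data_type": col_type, "is_nullable": not notnull}
        for _cid, name, col_type, notnull, _default, _pk
        in conn.execute(f"PRAGMA table_info({table})")
    ]
    return json.dumps({"table": table, "columns": columns}, indent=2)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE issues (id INTEGER PRIMARY KEY, title TEXT NOT NULL)")
before = json.loads(describe_table(conn, "issues"))

# A schema change is picked up on the next read, with no change to describe_table.
conn.execute("ALTER TABLE issues ADD COLUMN status TEXT")
after = json.loads(describe_table(conn, "issues"))
print([c["column_name"] for c in after["columns"]])
```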

Pros and Cons Analysis

Advantages

| Item | Content |
|---|---|
| Standardized Interface | The same server can be reused across every MCP-supporting client, such as Claude, Cursor, and Zed |
| LLM Independence | Server code does not depend on the LLM vendor. Moving from Claude to GPT-4o requires no server changes, as long as the new host supports MCP |
| Real-time Context | Injects actual data at runtime instead of a fixed prompt |
| Clear Security Boundaries | The server explicitly controls which tools and data are exposed |
| Multi-Agent Reuse | A single MCP server can be shared across multiple agent pipelines |

Disadvantages and Precautions

| Item | Content | Response Plan |
|---|---|---|
| Authentication/Authorization Management | With SSE, the developer is responsible for implementing authentication | Use MCP OAuth 2.1, or apply Bearer tokens or mTLS |
| Increased Latency | Every tool call triggers a real API/DB request (the trade-off for real-time data) | Response caching, timeout settings |
| Version Management | Changing a Tool schema can break compatibility with existing clients | Versioned tool names and deprecation flags |
| Error Propagation | An external service failure affects every agent | Graceful return with TextContent, Circuit Breaker pattern |
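As one way to contain the latency cost, a small time-to-live cache can sit in front of the API call. This is a sketch under simple assumptions: a single process, string keys, and a clock passed in so the expiry logic can be exercised without sleeping. `TTLCache` and `fetch_issue` are hypothetical names.

```python
import time
from typing import Callable


class TTLCache:
    """Cache values for `ttl` seconds; expired entries are refetched on access."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl
        self._clock = clock
        self._entries: dict[str, tuple[float, str]] = {}

    def get_or_fetch(self, key: str, fetch: Callable[[str], str]) -> str:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # Still fresh: skip the backend call.
        value = fetch(key)
        self._entries[key] = (now, value)
        return value


calls = []


def fetch_issue(issue_id: str) -> str:
    """Stand-in for the real HTTP request; records each backend hit."""
    calls.append(issue_id)
    return f"details for {issue_id}"


fake_now = [0.0]
cache = TTLCache(ttl=30.0, clock=lambda: fake_now[0])
cache.get_or_fetch("PROJ-123", fetch_issue)  # miss: calls the backend
cache.get_or_fetch("PROJ-123", fetch_issue)  # hit: served from the cache
fake_now[0] = 31.0
cache.get_or_fetch("PROJ-123", fetch_issue)  # expired: calls the backend again
print(len(calls))
```

The TTL is the knob that trades freshness for latency, so it should stay short for data the agent is expected to see in near real time. An asynchronous handler would need an `await`-based variant of `get_or_fetch`.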

The pattern that returns an error as TextContent looks like this (both fragments sit inside a call_tool handler):

```python
# Bad: a raised exception tells the LLM nothing useful and can halt the agent pipeline
raise ValueError("API call failed")

# Good: the LLM understands the situation and decides its next action itself
return [types.TextContent(
    type="text",
    text="Error: API call failed (503). Please try again shortly."
)]
```

Circuit Breaker: A pattern that blocks requests for a set period when an external service fails repeatedly, preventing the failure from spreading to the whole system. In Python, the pybreaker library implements it, and tenacity complements it with retry and backoff logic.
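The idea can be sketched without any library. The class below is a simplified illustration rather than how pybreaker works internally: it counts consecutive failures, opens the circuit at a threshold, and lets one trial call through after a cool-down. `CircuitBreaker` and its parameters are hypothetical names, and the clock is injectable so the example runs without waiting.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Open after `max_failures` consecutive failures; retry after `reset_after` seconds."""

    def __init__(self, max_failures: int, reset_after: float,
                 clock: Callable[[], float] = time.monotonic):
        self._max_failures = max_failures
        self._reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, func: Callable[[], str]) -> str:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self._reset_after:
                return "Error: service temporarily unavailable (circuit open). Try again later."
            self._opened_at = None  # Cool-down elapsed: let one trial request through.
        try:
            result = func()
        except Exception as exc:
            self._failures += 1
            if self._failures >= self._max_failures:
                self._opened_at = self._clock()
            return f"Error: upstream call failed ({exc})"
        self._failures = 0
        return result


def failing() -> str:
    raise ConnectionError("503")


fake_now = [0.0]
breaker = CircuitBreaker(max_failures=2, reset_after=60.0, clock=lambda: fake_now[0])
messages = [breaker.call(failing) for _ in range(3)]
print(messages[-1])
```

Returning strings rather than raising keeps the breaker consistent with the TextContent pattern: the third call never reaches the failing service, yet the LLM still receives a message it can act on.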

The Most Common Mistakes in Practice

- Neglecting the `description`: If the Tool's `description` and parameter descriptions are inadequate, the LLM will call the wrong tool or pass incorrect parameters. Descriptions are the API documentation that the LLM reads.
- Throwing errors as exceptions: If an exception is thrown when an error occurs during tool execution, the agent pipeline can be halted. Design error paths to return a meaningful message via `TextContent` so that the LLM recognizes the situation and responds.
- Cramming all tools into a single server: Concentrating unrelated tools on a single MCP server reduces the accuracy of the LLM's tool selection. Separate servers by domain and register only the necessary servers with the client.
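The first mistake is easy to catch mechanically. The following sketch flags tools whose description is missing or very short and parameters that have no description at all. `lint_tool` is a hypothetical helper, the tool definition is a made-up example, and the 20-character threshold is an arbitrary assumption to tune for your own tools.

```python
def lint_tool(tool: dict, min_length: int = 20) -> list[str]:
    """Flag descriptions that are missing or too short to guide tool selection."""
    warnings = []
    if len(tool.get("description", "")) < min_length:
        warnings.append(f"tool '{tool['name']}': description is missing or too short")
    properties = tool.get("inputSchema", {}).get("properties", {})
    for param, spec in properties.items():
        if not spec.get("description"):
            warnings.append(f"tool '{tool['name']}': parameter '{param}' has no description")
    return warnings


example_tool = {
    "name": "search_issues",
    "description": "Search",
    "inputSchema": {
        "type": "object",
        "properties": {
            "query": {"type": "string"},
            "status": {"type": "string", "description": "Filter by issue status"},
        },
    },
}

for warning in lint_tool(example_tool):
    print(warning)
```

A check like this can run in CI against the tool definitions a server declares, so thin descriptions are caught before an LLM ever sees them.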

In Conclusion

MCP is a protocol that "packages domain knowledge into code and provides it to LLMs via a standard interface," and the moment you build your own server, general-purpose AI transforms into a specialized agent for your organization.

3 Steps to Start Right Now:

- Install the MCP SDK and run a Hello World server: After `pip install "mcp[cli]"`, verify that a stdio server works using the official quickstart example.
- Register your most frequently used internal API as a Tool: The key is to write `inputSchema` and `description` in sufficient detail.
- Register the server in Claude Desktop's `claude_desktop_config.json`, then verify the tool call in an actual conversation.

Next Post: Covers how to implement team-based production deployments by containerizing MCP servers with Docker and switching to SSE Transport.